Quick Answer: Your digital avatar—the behavioral fingerprint built from your clicks, pauses, purchases, and patterns—often predicts your decisions before you consciously make them. Synthetic identity systems aggregate this data into a model that can be more consistent, less biased, and eerily more "you" than your own self-perception. The implications for trust, privacy, and agency are profound.

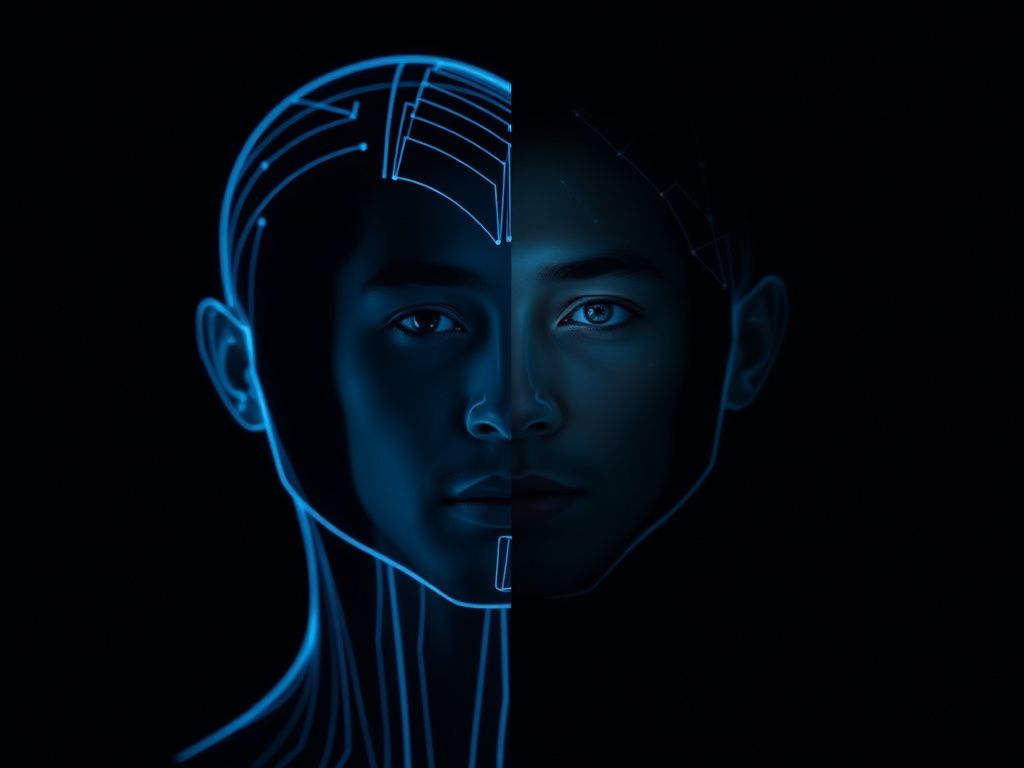

There's a quiet experiment running on you right now. Every app you open, every search query you abandon halfway through, every product you hover over for three seconds before closing the tab—all of it feeds a machine that is assembling a version of you. Not a caricature. A high-fidelity behavioral model. A synthetic identity.

The disturbing part isn't that this model exists. It's that in several measurable dimensions, it may be more accurate about you than you are.

What Is a Synthetic Identity (And Why It's Not What You Think)

Most people hear "synthetic identity" and think fraud: stolen SSNs, fabricated personas, credit card scams. That's the standard use of the term. This article uses it in a broader sense, borrowed loosely from computational psychology and behavioral AI: the composite digital model constructed from your behavioral, transactional, and social data.

Think of it as a behavioral clone, assembled from:

- Clickstream data — where your cursor moves, how long you hesitate before clicking

- Temporal patterns — when you shop, when you read, when you make impulsive decisions (hint: usually after 10 PM)

- Linguistic fingerprints — word choice, sentence length, emotional tone across messages and posts

- Purchase and consumption sequences — what you buy after a stressful week versus a calm one

- Social graph signals — who influences your decisions without you realizing it

Platforms like Netflix, Spotify, and Amazon don't just use this to recommend content. They use it to model your future states. Your synthetic identity isn't just a record of who you were. It's a probabilistic projection of who you're about to be.
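One minimal way to picture a "probabilistic projection" is a first-order Markov chain: count which action tends to follow which, then read those counts as odds for the next step. The sketch below is illustrative only. The event names and session logs are invented, and nothing here reflects how Netflix, Spotify, or Amazon actually build their models.

```python
from collections import Counter, defaultdict


def fit_transitions(sessions):
    """Count how often each action is immediately followed by each other action."""
    counts = defaultdict(Counter)
    for session in sessions:
        for current, nxt in zip(session, session[1:]):
            counts[current][nxt] += 1
    return counts


def next_action_probabilities(counts, current):
    """Turn the raw follow-up counts for `current` into probabilities."""
    followers = counts.get(current)
    if not followers:
        return {}  # no history for this action, so no projection
    total = sum(followers.values())
    return {action: n / total for action, n in followers.items()}


# Hypothetical event logs: each list is one browsing session.
sessions = [
    ["search", "view_item", "add_to_cart", "purchase"],
    ["search", "view_item", "close_tab"],
    ["search", "view_item", "add_to_cart", "close_tab"],
    ["view_item", "add_to_cart", "purchase"],
]

model = fit_transitions(sessions)
probs = next_action_probabilities(model, "add_to_cart")
print(probs)
```

With these made-up sessions, `probs` gives `purchase` a probability of 2/3 and `close_tab` a probability of 1/3 after `add_to_cart`. Production systems use far richer models, but the principle is the same: past sequences become odds on your next move.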

The Psychology Gap: Why You're a Worse Judge of Yourself Than an Algorithm

Humans carry a staggering load of cognitive biases. You engage in self-serving attribution (your successes are skill; your failures are circumstance). You fall victim to present bias, dramatically overvaluing immediate rewards over future ones. You reconstruct memories rather than replay them.
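Present bias has a standard textbook formalization, quasi-hyperbolic (beta-delta) discounting: anything that is not immediate takes an extra penalty `beta` on top of ordinary per-period discounting `delta`. The sketch below uses assumed, purely illustrative parameter values (`beta=0.7`, `delta=0.999` per day) to show the preference reversal this produces.

```python
def present_value(amount, delay_days, beta=0.7, delta=0.999):
    """Perceived value today of `amount` received after `delay_days`.

    beta  : extra penalty on anything that is not immediate (assumed value)
    delta : ordinary per-day discount factor (assumed value)
    """
    if delay_days == 0:
        return float(amount)  # immediate rewards are not discounted
    return beta * (delta ** delay_days) * amount


# Choice 1: $100 today vs. $110 tomorrow.
takes_money_now = present_value(100, 0) > present_value(110, 1)

# Choice 2: the same pair of options, pushed 30 days into the future.
waits_when_far_off = present_value(110, 31) > present_value(100, 30)

print(takes_money_now, waits_when_far_off)
```

Under these assumed parameters, both comparisons come out `True`: the same person takes $100 today over $110 tomorrow, yet prefers $110 in 31 days over $100 in 30 days. Patience is easy to endorse in advance and hard to deliver in the moment.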

Your synthetic identity, by contrast, doesn't lie to itself.

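A 2015 study by researchers at Cambridge and Stanford, published in PNAS, found that models trained on Facebook Likes judged personality traits more accurately than the people who knew the users best. Accuracy was scored against the users' own questionnaire answers, and with roughly 300 Likes the model outperformed the average spouse. For some life outcomes, such as substance use and political attitudes, the model's judgments even predicted better than the users' self-rated personality scores. Your spouse has processed years of lived experience. The algorithm won.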
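The core mechanic, learning from people whose traits are known and then scoring someone new from their Likes alone, can be sketched in a few lines. This is not the study's method. It is a deliberately crude stand-in that averages the trait scores of everyone who liked each page, and the pages, users, and scores are all invented.

```python
from collections import defaultdict
from statistics import mean


def fit_like_scores(users):
    """For each page, average the known trait score of everyone who liked it."""
    by_page = defaultdict(list)
    for likes, trait in users:
        for page in likes:
            by_page[page].append(trait)
    return {page: mean(scores) for page, scores in by_page.items()}


def predict_trait(like_scores, likes, fallback):
    """Estimate a new user's trait as the mean score of the pages they like."""
    known = [like_scores[page] for page in likes if page in like_scores]
    return mean(known) if known else fallback


# Hypothetical training data: (liked pages, self-reported extraversion on a 1-5 scale).
training_users = [
    ({"hiking", "karaoke"}, 4.5),
    ({"karaoke", "festivals"}, 4.0),
    ({"chess", "poetry"}, 2.0),
    ({"poetry", "hiking"}, 3.0),
]

like_scores = fit_like_scores(training_users)
outgoing = predict_trait(like_scores, {"karaoke", "festivals"}, fallback=3.0)
reserved = predict_trait(like_scores, {"chess", "poetry"}, fallback=3.0)
print(outgoing, reserved)
```

The toy version needs only two Likes to place a new user toward one end of the scale. Feed a properly trained model a few hundred Likes per person and the estimates get sharp enough to compete with human judges.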

This isn't a parlor trick. It reflects a fundamental asymmetry:

You experience your life from the inside, filtered through ego, emotion, and narrative. The model experiences you from the outside, filtered through nothing except statistical regularity.

That asymmetry has practical consequences everywhere—from the ads that convert you to the insurance premiums you pay to the job applications that get auto-screened out.

How Trust Gets Inverted

Here's the philosophical gut-punch: if your synthetic identity predicts your behavior more accurately than you predict your own behavior, then systems built on that model can make better decisions about you than you make about yourself—at least in narrow, well-defined domains.

Credit scoring is the oldest example. Your FICO score is essentially a synthetic identity fragment. Algorithmic credit decisions carry their own systemic biases against some populations, but some research suggests they can still be less biased than human loan officers making subjective calls. The algorithm doesn't care that you remind the loan officer of his unreliable brother.

The real trust inversion happens when you start asking: who does my synthetic identity serve?

- It serves you when Netflix's model saves you from wasting an hour on a film you'd hate.

- It serves a corporation when that same model identifies your emotional vulnerability window and serves a targeted ad during it.

- It serves a state when behavioral scoring systems (think China's Social Credit System, or the predictive policing algorithms deployed in some US cities) use your synthetic identity to constrain your options before you've acted.

The model is neutral. The deployment is not.