The democratization of synthetic biology, once heralded as the dawn of a "Third Industrial Revolution," has hit a grim, operational wall in 2026. As the infrastructure for distributed biological research moves from centralized, high-security facilities to a sprawling, decentralized network of cloud-integrated laboratories, the assumptions of 2020-era biosecurity have largely collapsed. The 2026 Vulnerability Report—an aggregated dataset compiled by independent watchdog consortiums and leaked internal audit logs—paints a picture of a sector struggling to manage the existential risks posed by its own scalability.

The problem is no longer about a "mad scientist" in a basement; it is about the mundane, systemic failure of API-driven, automated synthesis platforms that now underpin global biotech manufacturing.

The Erosion of Physical Gating

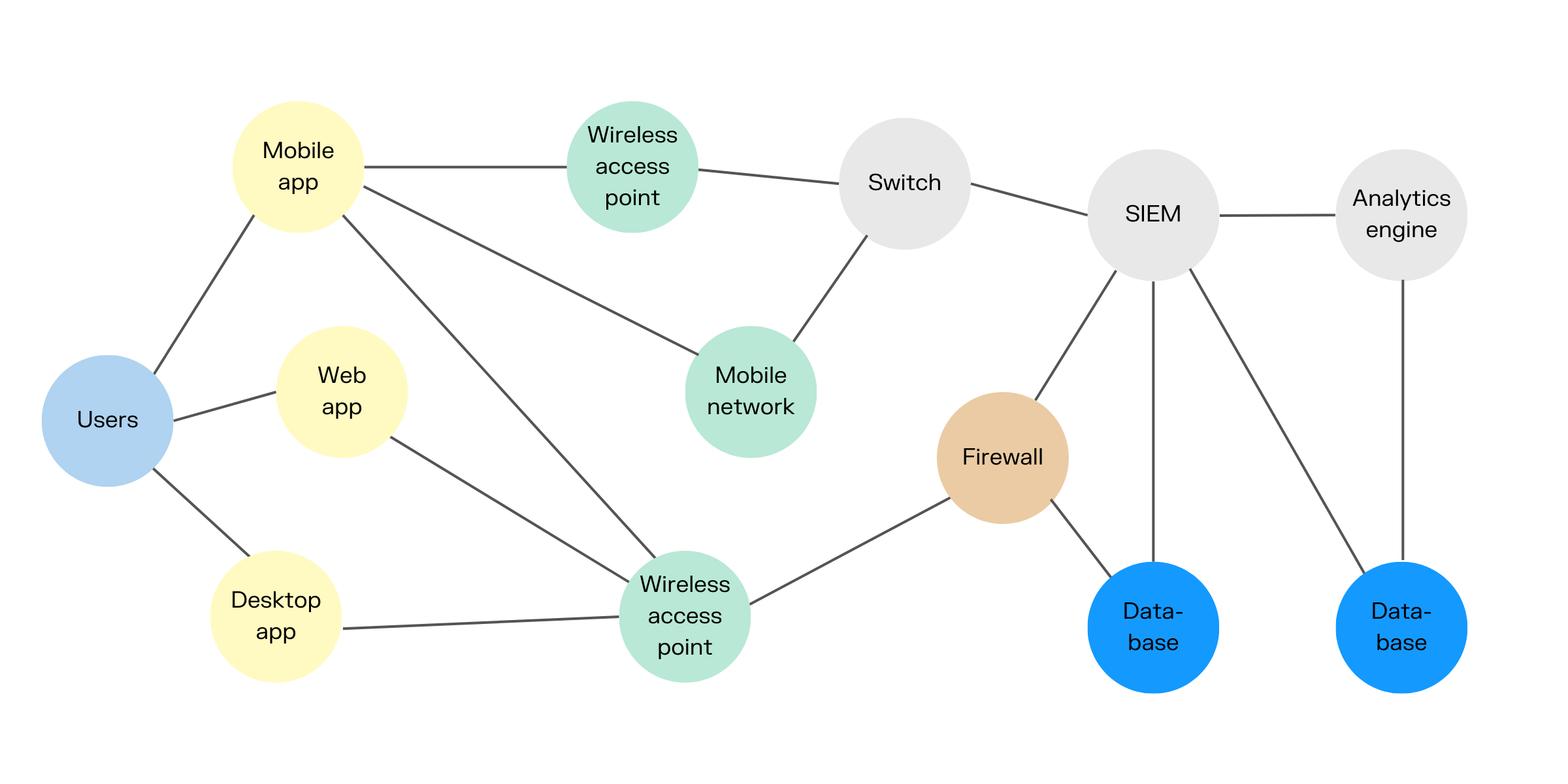

For decades, biosecurity was physical. It relied on badge readers, heavy-duty air filtration, and a select group of authorized personnel. Today, that physical barrier has been rendered nearly irrelevant by the "Lab-in-the-Cloud" model. Researchers now submit DNA sequences via encrypted API calls to contract research organizations (CROs) that possess proprietary synthesis hardware.

The security bottleneck has shifted from the laboratory door to the cloud-native middleware that connects the researcher's design software to the synthesizer's firmware. In 2026, the vulnerability isn't just a physical breach; it is an "upstream injection." If an attacker compromises the authentication token of a legitimate research account at a major synthetic biology platform (such as the widely used "HelixSync" middleware), they can bypass the automated sequence screening protocols that are supposed to catch known pathogens.

"We saw it in the GitHub issues for the OpenBio-API back in early 2026," says Marcus Thorne, a pseudonym for a cybersecurity researcher who monitors biosecurity threads on darknet forums. "Developers were prioritizing low latency for automated synthesis orders. They implemented a tiered screening process where 'verified' institutional partners got faster turnarounds. The bypass wasn't a complex hack; it was an exploit of the 'expedited review' whitelist. If you mirror the metadata structure of a top-tier university, the screening algorithm effectively skips the deep-sequence analysis."

The "Shadow Lab" Ecosystem

The most dangerous reality of 2026 is the growth of the "Shadow Lab" ecosystem. Much like the dark web marketplaces of the previous decade, these platforms facilitate the synthesis of sequences that trigger automated red flags on legitimate commercial platforms.

The industry refers to this as "protocol drift." When commercial synthesizers harden their screening logic, researchers, or those with malicious intent, shift to decentralized, open-source hardware that does not enforce sequence screening by design. This is not a policy failure; it is a fundamental design choice. The open-source bio-hardware community, largely thriving on platforms like GitLab and niche Discord servers, argues that strict, hard-coded screening prevents legitimate innovation.

"If we build in a 'kill-switch' or a mandatory screening layer, we effectively hand the keys to our research infrastructure to whichever corporate entity happens to own the cloud middleware," one maintainer of a popular open-source sequencer wrote in a public thread. "We aren't creating biosecurity risks; we are creating a sandbox for independent science."

Scaling Failures and Operational Friction

The operational reality is far messier than the glossy brochures of biotech startups suggest. In 2026, the scaling of distributed labs has created a "Support Nightmare." A common thread on Reddit’s /r/biohackers and in technical support channels for industrial lab automation shows a recurring pattern: hardware timeouts caused by firmware bugs that occur during the final stages of a synthesis run.

When these systems fail—which they do with surprising frequency—they often fail in an unrecoverable, "dirty" state. In one incident in late 2025, a laboratory in Europe attempted to automate the production of a bespoke protein scaffold. A kernel panic in the local control unit occurred exactly when the system was supposed to initiate the cleaning cycle for the DNA synthesizer. The result was a contaminated unit that remained offline for three weeks, while the local IT team struggled to find a remote support technician who could access the proprietary firmware.
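One common way to make this kind of failure recoverable is to journal the run stage to durable storage, so that a restarted controller knows whether the cleaning cycle ever completed. The following is a rough sketch of that idea, not a description of any vendor's firmware: the stage names, the `RunJournal` class, and the helper functions are all hypothetical.

```python
import json
from pathlib import Path

# Hypothetical run stages, in order. Real hardware would define its own.
STAGES = ["idle", "synthesizing", "cleaning", "clean"]


class RunJournal:
    """Persists the current stage so it survives a controller crash."""

    def __init__(self, path: Path):
        self.path = path

    def record(self, stage: str) -> None:
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        # Write to a temporary file, then rename it over the journal, so a
        # crash mid-write is unlikely to leave a half-written journal behind.
        tmp = self.path.with_suffix(".tmp")
        tmp.write_text(json.dumps({"stage": stage}))
        tmp.replace(self.path)

    def last_stage(self) -> str:
        # No journal yet means no run has ever started.
        if not self.path.exists():
            return "idle"
        return json.loads(self.path.read_text())["stage"]


def needs_cleaning(journal: RunJournal) -> bool:
    """True if the last run stopped before the cleaning cycle finished."""
    return journal.last_stage() in ("synthesizing", "cleaning")


def can_start_run(journal: RunJournal) -> bool:
    """Refuse new runs until an interrupted one has been cleaned up."""
    return not needs_cleaning(journal)
```

On reboot, a controller built this way would read the journal first. If the last recorded stage is `synthesizing` or `cleaning`, it would report the unit as dirty and block new orders rather than silently returning to service.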

This "broken-pipe" problem is endemic. Because the labor force managing these labs is increasingly decentralized and less specialized, when a device hits an "edge-case" error, it sits there, useless and potentially biologically active.

The Illusion of Algorithmic Safety

The industry has spent billions on "Screening AI"—algorithms that cross-reference submitted DNA sequences against databases of known pathogens. But the 2026 Report indicates that these databases are lagging behind real-world discoveries.

"The fundamental problem is that the database of 'dangerous' sequences is static, while the science of synthetic biology is hyper-dynamic," notes Dr. Elena Vance, a former consultant for a major bioinformatics firm. "You can’t just blacklist a string of genetic code. Functionality is context-dependent. A sequence might be harmless in one frame of reference and highly toxic in another. The algorithms are looking for signatures, not functions."

This is where the "Workaround Culture" takes over. Researchers, faced with automated rejections for legitimate, potentially sensitive research, have begun to adopt obfuscation techniques. They chop sequences into smaller segments, introduce "nonsense" junk data to scramble the matching algorithms, and reassemble the sequences in their own local, unregulated lab environments. This manual reassembly process significantly increases the risk of contamination and lab accidents.

Case Study: The "Sync-Breach" of July 2026

In July 2026, a series of unauthorized synthesis orders placed via a compromised research account triggered an industry-wide panic. The attacker had managed to inject an obfuscated, potentially harmful genetic sequence into a legitimate, large-scale order of synthetic DNA. Because the order was tied to an account with a long-standing reputation for high-volume, standard research, the automated flagging systems treated it as a "high-trust" event.